It’s happened again.

This time it is Facebook user data, left by a third-party company building on Facebook’s platform in an Amazon S3 bucket with publicly (anonymously) accessible data: 540 million exposed records.

Before that: Verizon, PocketiNet, GoDaddy, Booz Allen Hamilton, Dow Jones, WWE, Time Warner, the Pentagon, Accenture, and more. Large, presumably trusted names.

Let’s start with the truth: objects (files, data) uploaded to S3, with no options set on the bucket or object, are private by default.

Someone has to either set a Bucket Policy that makes objects anonymously accessible, or set a public ACL on each object, for objects to be shared.

Let’s be clear.

These breaches are the result of someone uploading data and setting the public-read ACL on it, or editing the bucket’s resource policy (which overrides object ACLs) to facilitate anonymous public access.
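To make those two routes concrete, here is a rough Python heuristic (not an AWS tool) that flags both: an Allow statement in a bucket policy whose Principal is the wildcard, and an ACL grant to S3’s AllUsers or AuthenticatedUsers groups. The function names are hypothetical; the inputs are assumed to be the policy JSON string and the Grants list returned by S3’s GetBucketPolicy and GetObjectAcl/GetBucketAcl APIs. Conditions on a statement can narrow a wildcard grant, so treat a hit as “needs review” rather than proof of exposure; S3’s own GetBucketPolicyStatus API gives the authoritative verdict on whether AWS considers a policy public.

```python
import json
from typing import Any, Dict, List

# Grantee group URIs that S3 treats as public when they appear in an ACL.
PUBLIC_GROUP_URIS = {
    "http://acs.amazonaws.com/groups/global/AllUsers",
    "http://acs.amazonaws.com/groups/global/AuthenticatedUsers",
}


def is_wildcard_principal(principal: Any) -> bool:
    """True if a policy Principal means "everyone": "*" or {"AWS": "*"} (possibly in a list)."""
    if principal == "*":
        return True
    if isinstance(principal, dict):
        aws = principal.get("AWS")
        return aws == "*" or (isinstance(aws, list) and "*" in aws)
    return False


def policy_may_allow_anonymous(policy_json: str) -> bool:
    """Flag any Allow statement with a wildcard principal (Conditions are NOT evaluated)."""
    statements = json.loads(policy_json).get("Statement", [])
    if isinstance(statements, dict):  # a lone statement may be an object, not a list
        statements = [statements]
    return any(
        s.get("Effect") == "Allow" and is_wildcard_principal(s.get("Principal"))
        for s in statements
    )


def acl_grants_public(grants: List[Dict[str, Any]]) -> bool:
    """True if any grant in an ACL "Grants" list targets one of the public groups."""
    return any(g.get("Grantee", {}).get("URI") in PUBLIC_GROUP_URIS for g in grants)
```

A policy granting s3:GetObject to "*" on arn:aws:s3:::example-bucket/* (a made-up bucket) is exactly the kind of edit described above, and policy_may_allow_anonymous flags it.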

Having S3 accessible via authenticated HTTP(S) is great. Having it available directly via anonymous HTTP(S) is not, though historically that was a valid use case.

This week I updated a client’s account, which serves a static website hosted in S3, to enable the master “Block Public Access” setting across the entire AWS account. And I sleep easier. Their service had no downtime during the switch, no significant increase in cost, and nobody can side-step the CloudFront caching CDN any more by sending requests straight to the S3 bucket.

Serving from S3 is terrible

When you make an object public, anyone can fetch it from S3 without authentication. It can also be served over unencrypted HTTP (which is a terrible idea).

When you hit the S3 endpoint, the TLS certificate matches the S3 endpoint hostname, something like s3.ap-southeast-2.amazonaws.com. That hostname has nothing to do with your brand, whereas a name like files.mycompany.com would at least show that the data is affiliated with your business. With the S3 endpoint, you have no choice.

Setting unencrypted HTTP aside, the S3 endpoint’s TLS configuration for HTTPS is also rather loosely curated, as it is a public, shared endpoint with over a decade of backwards compatibility to maintain. TLS 1.0 is still enabled, which would not comply with PCI DSS 3.2 (and TLS 1.1 is there too, which IMHO is next to useless).
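If you want to check which protocol versions an endpoint accepts for yourself, a minimal Python sketch is to attempt a handshake pinned to exactly one TLS version using the standard ssl module. The function name is hypothetical. Results depend on your local OpenSSL build as well as the server: many builds refuse TLS 1.0/1.1 at their default security level, so the sketch tries to lower it, and not every TLS library accepts that setting.

```python
import socket
import ssl


def accepts_tls_version(host: str, version: ssl.TLSVersion,
                        port: int = 443, timeout: float = 5.0) -> bool:
    """Return True only if the server completes a handshake at exactly `version`."""
    ctx = ssl.create_default_context()
    # Pin both ends of the range so only one protocol version can be negotiated.
    ctx.minimum_version = version
    ctx.maximum_version = version
    try:
        # Lower the local security level so we test the server's policy,
        # not our own client's; ignore builds that don't support @SECLEVEL.
        ctx.set_ciphers("DEFAULT:@SECLEVEL=0")
    except ssl.SSLError:
        pass
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            with ctx.wrap_socket(sock, server_hostname=host):
                return True
    except OSError:  # DNS failures, refused connections and ssl.SSLError all land here
        return False
```

For example, loop over ssl.TLSVersion.TLSv1, TLSv1_1 and TLSv1_2 and call accepts_tls_version("s3.ap-southeast-2.amazonaws.com", v) for each; Python 3.10+ emits a DeprecationWarning when you select the older versions, which is expected.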

It’s worth noting that there are also dual-stack (IPv4 and IPv6) endpoints, such as s3.dualstack.ap-southeast-2.amazonaws.com.
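As a small illustration, these hypothetical Python helpers build the regional and dual-stack endpoint hostnames and a virtual-hosted-style object URL. Whichever form you use, the hostname (and so the certificate the client validates) belongs to AWS, not to your brand. The bucket and key in the usage note are made up.

```python
from urllib.parse import quote


def s3_endpoint(region: str, dualstack: bool = False) -> str:
    """Regional S3 REST endpoint hostname, optionally the dual-stack variant."""
    if dualstack:
        return f"s3.dualstack.{region}.amazonaws.com"
    return f"s3.{region}.amazonaws.com"


def object_url(bucket: str, key: str, region: str, dualstack: bool = False) -> str:
    """Virtual-hosted-style HTTPS URL: the bucket name becomes part of AWS's hostname."""
    # quote() percent-encodes unsafe characters but keeps "/" as a path separator.
    return f"https://{bucket}.{s3_endpoint(region, dualstack)}/{quote(key)}"
```

For instance, object_url("example-bucket", "img/logo.png", "ap-southeast-2") yields https://example-bucket.s3.ap-southeast-2.amazonaws.com/img/logo.png.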

So how can we fix this?

CloudFront + Origin Access Identity

CloudFront lets us select one of several TLS security policies predefined by AWS, each of which restricts the available protocols and ciphers. This lets us drop “early crypto” and serve TLS 1.2 only.

CloudFront also lets us use a custom domain name for SNI-enabled clients at no additional cost, or a dedicated IP address for non-SNI clients at a significant monthly cost (not worth it, IMHO).
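In API terms, those two choices come down to a few fields on the distribution’s ViewerCertificate, plus its Aliases list. This Python sketch builds those dictionaries in the shape the CloudFront API expects (for example inside a DistributionConfig passed to boto3’s create_distribution). The helper names, certificate ARN and hostname are hypothetical, and TLSv1.2_2021 is just one of the TLS 1.2-only policy names AWS offers. CloudFront only uses ACM certificates issued in us-east-1.

```python
from typing import Any, Dict, List


def viewer_certificate(acm_certificate_arn: str,
                       min_protocol: str = "TLSv1.2_2021",
                       dedicated_ip: bool = False) -> Dict[str, Any]:
    """ViewerCertificate for a custom hostname with a TLS 1.2+ security policy."""
    # Older policies such as "TLSv1_2016" still allow TLS 1.0; refuse them here.
    if not min_protocol.startswith(("TLSv1.2", "TLSv1.3")):
        raise ValueError(f"{min_protocol!r} would allow pre-TLS 1.2 protocols")
    return {
        "CloudFrontDefaultCertificate": False,
        "ACMCertificateArn": acm_certificate_arn,  # must be an ACM cert in us-east-1
        # "sni-only" costs nothing extra; "vip" pays for dedicated IPs for non-SNI clients.
        "SSLSupportMethod": "vip" if dedicated_ip else "sni-only",
        "MinimumProtocolVersion": min_protocol,
    }


def aliases(hostnames: List[str]) -> Dict[str, Any]:
    """CloudFront's Aliases structure: the custom names the distribution answers to."""
    return {"Quantity": len(hostnames), "Items": list(hostnames)}
```

Leaving dedicated_ip at its default matches the advice above.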

Origin Access Identities give CloudFront a rolling API keypair that the service can use to access S3. Your S3 bucket then has a policy permitting this Identity to read the bucket’s objects.

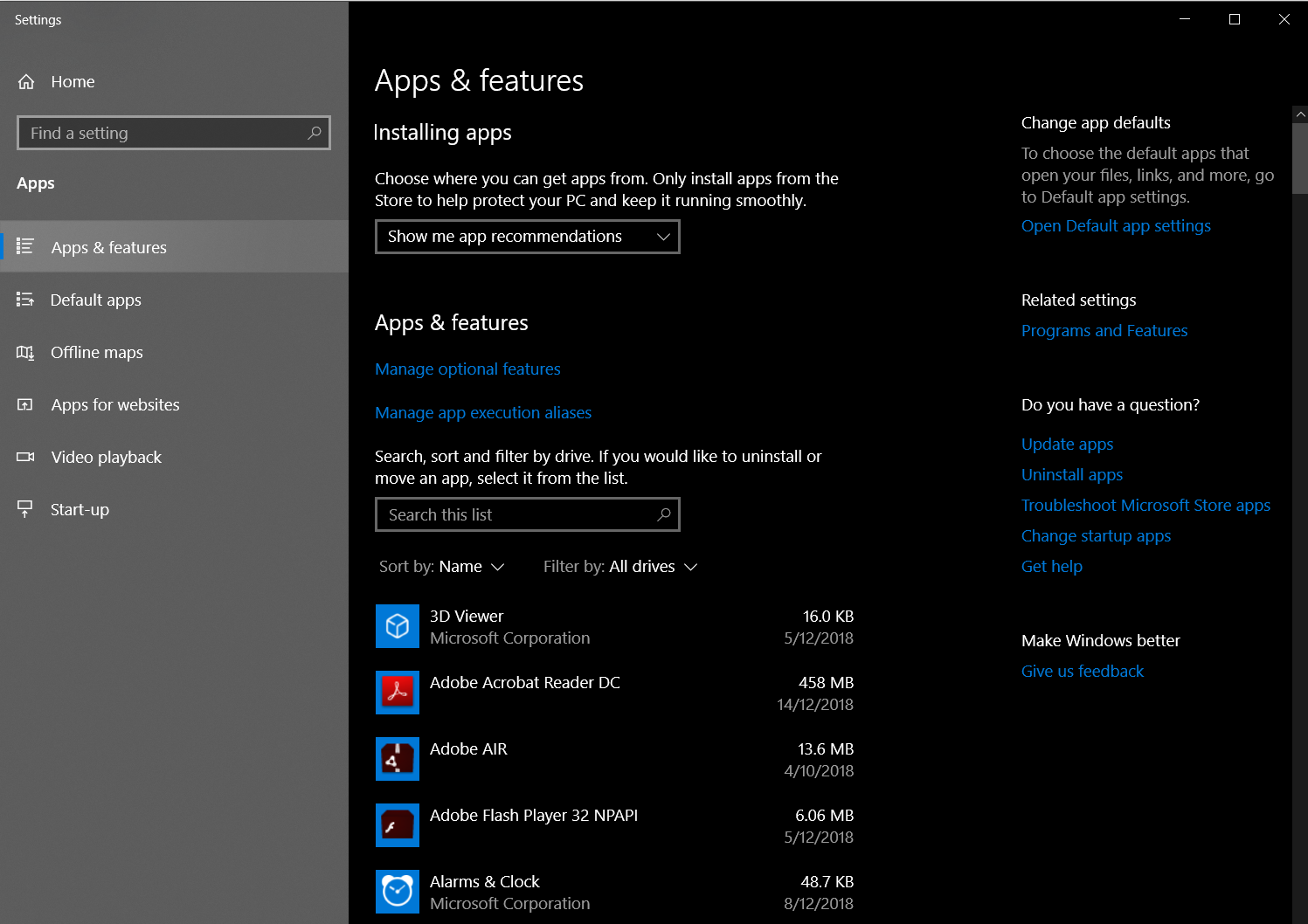
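For reference, the bucket policy for an OAI can be generated rather than hand-edited. This Python sketch uses the documented principal format for an OAI (arn:aws:iam::cloudfront:user/CloudFront Origin Access Identity followed by the OAI ID); the function name, bucket name and OAI ID are hypothetical placeholders.

```python
import json


def oai_bucket_policy(bucket: str, oai_id: str) -> str:
    """Bucket policy letting one CloudFront OAI read objects, and nothing else."""
    principal_arn = f"arn:aws:iam::cloudfront:user/CloudFront Origin Access Identity {oai_id}"
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowCloudFrontOAIRead",
                "Effect": "Allow",
                "Principal": {"AWS": principal_arn},  # a specific identity, never "*"
                "Action": "s3:GetObject",
                "Resource": f"arn:aws:s3:::{bucket}/*",  # objects only, not the bucket itself
            }
        ],
    }
    return json.dumps(policy, indent=2)
```

Granting only s3:GetObject means a missing key comes back from S3 as 403 rather than 404; adding s3:ListBucket on the bucket ARN changes that, at the cost of letting the identity list your keys.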

With this access in place, you can then flick the “Block Public Access” switch, perhaps enabling it on the bucket first and the account-wide setting last.
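A sketch of that ordering in Python, assuming boto3 and credentials that are allowed to call PutPublicAccessBlock. It first builds a reviewable plan (each listed bucket, then the account) and only touches AWS when apply_plan is called. The helper names, bucket name and account ID are hypothetical. An OAI grant like the one above is not “public” in S3’s sense, so turning on all four settings should leave CloudFront’s access intact.

```python
from typing import Any, Dict, List, Tuple

# All four Block Public Access settings switched on.
FULL_BLOCK: Dict[str, bool] = {
    "BlockPublicAcls": True,
    "IgnorePublicAcls": True,
    "BlockPublicPolicy": True,
    "RestrictPublicBuckets": True,
}

Step = Tuple[str, str, Dict[str, Any]]  # (boto3 service, operation name, keyword args)


def rollout_plan(buckets: List[str], account_id: str) -> List[Step]:
    """Bucket-level settings first, account-wide setting last."""
    steps: List[Step] = [
        ("s3", "put_public_access_block",
         {"Bucket": b, "PublicAccessBlockConfiguration": dict(FULL_BLOCK)})
        for b in buckets
    ]
    steps.append(
        ("s3control", "put_public_access_block",
         {"AccountId": account_id, "PublicAccessBlockConfiguration": dict(FULL_BLOCK)})
    )
    return steps


def apply_plan(steps: List[Step]) -> None:
    """Run each step in order (boto3 is imported here so plans can be built without it)."""
    import boto3

    clients: Dict[str, Any] = {}
    for service, operation, kwargs in steps:
        if service not in clients:
            clients[service] = boto3.client(service)
        getattr(clients[service], operation)(**kwargs)
```

Printing the plan before calling apply_plan gives you a chance to check the bucket list, and to confirm the site still works after the bucket-level step before committing the whole account.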

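One thing to work out is how you handle URLs ending in “/”. Unlike the S3 static website endpoint, the S3 REST endpoint that CloudFront reads through the OAI doesn’t serve index documents for subdirectories, so we use Lambda@Edge to rewrite a trailing “/” into “/index.html”. Similarly, URL paths like “/foo” that have no file extension get mapped to “/foo/index.html”.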
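A minimal sketch of that rewrite as a Python Lambda@Edge function. It assumes the function is attached as an origin-request trigger (the post doesn’t say which trigger is used) and treats “no dot in the last path segment” as “no typical suffix”; both are assumptions, as are the function names. The event shape (Records[0].cf.request.uri) is the standard Lambda@Edge one, and returning the modified request passes it on to the origin.

```python
from typing import Any, Dict


def rewrite_uri(uri: str) -> str:
    """Map directory-style paths onto the index.html object stored in S3."""
    if uri.endswith("/"):
        return uri + "index.html"          # "/blog/" -> "/blog/index.html"
    last_segment = uri.rsplit("/", 1)[-1]
    if "." not in last_segment:
        return uri + "/index.html"         # "/foo" -> "/foo/index.html"
    return uri                             # "/css/site.css" is left alone


def lambda_handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
    """Lambda@Edge entry point: rewrite the URI, then forward the request."""
    request = event["Records"][0]["cf"]["request"]
    request["uri"] = rewrite_uri(request["uri"])
    return request
```

Running it on an origin request means the rewrite only happens on a cache miss, while CloudFront still caches under the URL the visitor asked for.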

Governance FTW?

So, have you checked whether Block Public Access is enabled in your account(s)? How about a sweep through them right now?
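For the sweep, a minimal Python sketch: it asks the S3 Control API for the account-level Block Public Access configuration of whichever account your current credentials belong to, and reports any of the four settings that are off. It assumes boto3 is installed; the function names are hypothetical. Call check_current_account() once per account, for example with AWS_PROFILE set to each profile in turn. Bucket-level settings would need a similar loop calling S3’s GetPublicAccessBlock per bucket.

```python
from typing import Dict, List, Tuple

BPA_FLAGS = ("BlockPublicAcls", "IgnorePublicAcls", "BlockPublicPolicy", "RestrictPublicBuckets")


def missing_flags(config: Dict[str, bool]) -> List[str]:
    """Names of Block Public Access settings that are absent or False."""
    return [flag for flag in BPA_FLAGS if not config.get(flag, False)]


def check_current_account() -> Tuple[str, List[str]]:
    """Return (account_id, settings still off) for the account behind the current credentials."""
    import boto3
    from botocore.exceptions import ClientError

    account_id = boto3.client("sts").get_caller_identity()["Account"]
    try:
        response = boto3.client("s3control").get_public_access_block(AccountId=account_id)
        config = response["PublicAccessBlockConfiguration"]
    except ClientError as err:
        # No account-level configuration at all means every setting is off.
        if err.response["Error"]["Code"] == "NoSuchPublicAccessBlockConfiguration":
            config = {}
        else:
            raise
    return account_id, missing_flags(config)
```

An empty list from check_current_account() means all four account-wide settings are on.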

If you’re not sure about this, contact me.