DNS is the fundamental directory service of the Internet, and these days, of all corporate systems performing discovery of their various components in digital deployments. It is used at least hundreds of times per day per device, and no one really notices until it breaks.

For the most part, it’s what changes the hostname you used to navigate to this article – blog.james.rcpt.to – into an IP address: the numbered address that is supposed to uniquely identify the server or load balancer connected to the public Internet, to which your browser then makes a network connection.

If your DNS breaks, then your service, or perhaps your entire company, is “off the air”, no longer discoverable by clients wishing to use human-readable labels and names to find your address.

I recently gave a 45-minute presentation at the AWS User Group in Perth, Western Australia, highlighting some of the advantages of using Route53 for DNS, and some of the more modern security protections therein; slides can be found here.

I have helped various organisations transition their authoritative DNS from their existing services to AWS Route53. This migration, in isolation, requires coordination, preparedness, and awareness of the impact of poor planning; it is also an opportunity for service improvement.

DNS Migration Planning

DNS manages to serve the scale of the planet by effective use of caching. This is done at multiple layers in the service.

When a DNS service does a lookup for a query whose answer it has not already cached (or whose cached answer has expired), it must start a recursive resolution. For this to happen, it needs to find the Authoritative Name Server for a Zone. This Authoritative Name Server will be delegated down from a parent domain, and so on, until we reach the top of the DNS tree.

A common example in this scenario is the address “www.example.com”. Before answering the address for this exact name, a client must determine the address of the Authoritative Name Server(s) for “example.com”. If this is unknown, then the client must ask the address of the Authoritative Name Server(s) for “.com”. Again, if “.com” Name Servers are not already known (cached), then the level above that must be queried, commonly called the Public DNS Roots, or “.”.
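As a minimal sketch of that walk, the snippet below lists the zones a resolver with an empty cache would ask about, root first. The function name `delegation_chain` is made up for illustration, and it assumes every label is its own delegated zone, which is not always true in practice.

```python
def delegation_chain(hostname: str) -> list[str]:
    """Return the names a cold-cache resolver works through, root first."""
    labels = [label for label in hostname.rstrip(".").split(".") if label]
    chain = ["."]  # the public DNS root is always the starting point
    # Build each successively longer suffix: "com.", "example.com.", ...
    for i in range(len(labels) - 1, -1, -1):
        chain.append(".".join(labels[i:]) + ".")
    return chain


print(delegation_chain("www.example.com"))
# ['.', 'com.', 'example.com.', 'www.example.com.']
```

Every entry that is already cached is a round trip the resolver can skip, which is why caching matters so much at each layer.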

The Global Root DNS Servers

These DNS roots are globally agreed, and every DNS server is given a (relatively) static file of the addresses for these. They are given generic names, A-M, and are operated by a variety of organisations who agree to share the same data for the 1000+ Top Level Domains under the globally agreed root.

These Root DNS Servers are all accessible by both the existing IPv4 address scheme, and the newer IPv6 Internet. Their addresses are held in a file commonly called the “root.hints” file, which is distributed with all DNS server software as the initial glue. It rarely changes.

In a trick of Internet routing (BGP), many of these 13 hosts (A-M) are also each replicated multiple times by a process called Anycast: the same small address segment that the server lives on is “announced” to the world from multiple locations, each with a duplicate server performing the same process and responding with the same answers.

This first layer of scalability helps the root servers deal with billions of devices using the DNS service every second.

The Global Top Level Domains (TLDs): Registry Operator and Registrars

Each of the global TLDs is operated by a Registry Operator, but records are added and removed by multiple Registrar organisations. For example, the “.com” zone is operated by Verisign, but there are many Registrar organisations you can obtain a DNS name from, amongst which is Route53 itself.

These operators have a selection of innovations and policies they apply to their delegation. Some operate their service with just IPv4, and some are dual-stack IPv4 and IPv6. Some operators have their DNS zones cryptographically signed (using DNSSEC) to provide some validation of the DNS queries.

Route53

Starting in December 2010, Route53 originally provided support only for hosting DNS Zones for customers. The engineering for the service at that time was designed to eclipse what most organisations had in place, providing higher reliability and scalability.

Back in the day, the authoritative references on running DNS services were the BIND Operators Guide (aka The BOG), and the O’Reilly book by author Cricket Liu. Most organisations ran just two DNS servers to respond to their customers’ queries, and the most common software for doing so was ISC’s Berkeley Internet Name Domain, or BIND.

Of course, to have your own DNS server, you need a fixed IP address that your server would operate from, as this IP address is what the upstream zone would respond with to clients. And thus the initial problem for most organisations was getting a pool of static IP addresses.

Most ISPs only hand out dynamic addresses, and charge substantially more for static routes. Other (typically larger) organisations went through a laborious process of having IP addresses themselves assigned to their organisation (through ARIN, APNIC, or other IP address registries), and then deploying BGP to announce their range to their connected ISP(s) – could be multiple.

This overhead of assigned IP address ranges, setting up corporate BGP (and trying to secure it) all went away with the launch of hosted DNS services, and Route53 turned out to be one of the most well engineered and cost-effective solutions.

With Zones hosted we can delegate from the parent domain to the name servers that Route53 provides us; each individual record (e.g., “www”) can then point to any IP address (i.e., anywhere). The entire need for corporations acquiring large blocks of IP addressing for their organisation went away.

Indeed, I have helped organisations who previously had very large, fixed blocks of IPv4 addresses to relinquish some of these in a commercial market (for millions of dollars).

Route53 has expanded its remit in the AWS Cloud environment. In addition to hosting authoritative DNS zones, it also offers Registrar Services for hundreds of top-level domains, as well as tuning the use of DNS within the Virtual Private Cloud Environment. Each of these functions can be used completely separately; for example, you can:

- Register a domain with Route53 to handle the re-registration, but delegate to your own (or a 3rd party) DNS servers.

- Register a domain with another Registrar (e.g., GoDaddy, Verisign) and delegate to a Route53 Hosted Zone.

- Configure complex routing and protection mechanisms for your Virtual Cloud Environment.

- Host private DNS for your VPC, invisible to the outside world.

In this article, we are going to concentrate on running Public DNS Zones, and the protections you can put in place.

Route53 Public DNS Zone Hosting: Scalability

By default, Route53 gives the operator a set of 4 DNS servers to pass to the parent domain for delegation. Each of the 4 servers is itself given a DNS name, and the four names come from four different TLDs. The four names also each resolve to both IPv4 and IPv6 addresses.
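A quick way to sanity-check a delegation set is to confirm that its names really do span four TLDs. The sketch below does only that; the helper name `delegation_tlds` and the four name server names are illustrative placeholders, not a real delegation set.

```python
def delegation_tlds(name_servers: list[str]) -> set[str]:
    """Return the distinct top-level labels used by a set of name server names."""
    return {ns.rstrip(".").rsplit(".", 1)[-1].lower() for ns in name_servers}


# Hypothetical delegation set, shaped like the names Route53 hands out.
example_set = [
    "ns-1.awsdns-00.com.",
    "ns-512.awsdns-01.net.",
    "ns-1024.awsdns-02.org.",
    "ns-1536.awsdns-03.co.uk.",
]

tlds = delegation_tlds(example_set)
print(sorted(tlds), len(tlds) == len(example_set))
```

Spreading the names over different TLDs means a problem in one TLD's name servers does not make all four delegated names unresolvable at once.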

The parent domain can then record (and cache) the delegation addresses of these 4 DNS server endpoints, and can instruct end clients doing lookups to also cache this delegation.

Each of those four endpoint addresses is also potentially itself Anycast announced from multiple locations worldwide. This helps clients reach the closest deployed endpoint for each of the four names, reducing DNS latency.

This set of 4 DNS servers provides much greater reliability than the traditional two, and the multiple anycast presentation further improves this. The chance of any other AWS Route53 customer having the same set of 4 DNS server endpoints is very small, so a Denial of Service attack on the specific set of delegated IP addresses for another Zone is unlikely to affect your zone significantly. This is part of the reason why Route53 offers a 100% availability Service Level Agreement (SLA).

(Note: the control plane, for providing updates to records, is not covered by this SLA.)

Route53: Questions

A number of configuration questions arise when planning the migration:

- Do you want query logging turned on?

  - This is delivered to an S3 bucket: what’s the retention policy on this (look at S3 life cycle policies)? Always set an automated time to delete, perhaps in 12 months.

  - What’s the analytics processing done on this data, if any?

  - Who has access to this log data? Is the bucket marked private, with default encryption and versioning?

- Do you want DNSSEC enabled on the zone? Perhaps do this after service migration if you don’t currently have DNSSEC enabled.

- What integrations for automated updates are in place, if any?

- Who needs access to the console to see and/or update records?
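For the retention question, here is a minimal sketch of an S3 life cycle rule that deletes log objects after 12 months. The function name `expiry_lifecycle` and the rule ID are my own placeholders; the returned dictionary is shaped so it could be passed as the `LifecycleConfiguration` argument to boto3's S3 `put_bucket_lifecycle_configuration` call, which is not invoked here.

```python
def expiry_lifecycle(days: int = 365, prefix: str = "") -> dict:
    """Build an S3 life cycle configuration that expires objects after `days`."""
    if days <= 0:
        raise ValueError("days must be a positive number")
    return {
        "Rules": [
            {
                "ID": f"expire-dns-query-logs-after-{days}-days",  # hypothetical ID
                "Filter": {"Prefix": prefix},  # an empty prefix matches every object
                "Status": "Enabled",
                "Expiration": {"Days": days},
            }
        ]
    }


print(expiry_lifecycle())
```

Keeping the rule as plain data makes it easy to review alongside the answers to the other questions before anything is applied to a bucket.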

Route53: Migration

The process for migrating to Route53 is relatively simple:

1. Reduce the parent domain’s cache time (TTL, time to live; see below) for the delegation records that point at your current service: a value of 300 seconds may be reasonable.

2. Prepare the DNS export from the older service; review the records in there before doing a test import into the new service to ensure that no records cause any issue. This is perfectly safe as we have not redelegated yet. You should also review the individual records’ TTL values, and potentially reduce them as part of the export/import. Any web site or load balancer should run with a TTL no higher than 300 seconds. Once the export/import has been successful, then delete the imported records – we will take a fresh update later…

3. Determine if there are any processes that are automatically updating your DNS. These will have to be integrated to call the Route53 API for those updates after the migration.

4. Ensure you have access to redelegate with the Registrar. Test the username and password to log in.

5. Schedule a time for the transition, during which we will avoid updates, or update both old and new. You will have to wait for 5 periods of the original TTL time to pass since step 1. For example, if the Delegation TTL was a week, then wait 5 weeks before proceeding (see below on TTL)!

6. At the agreed time:

   a. Record the current (old) delegation IP addresses the registrar has configured.

   b. Re-export the values.

   c. Update the record TTLs in the export.

   d. Import into Route53.

   e. Update the Registrar with the four new Delegation addresses.

   f. Test the DNS immediately; if it fails, revert the Delegation to the addresses in step (a) above.

   g. Watch logs/metrics from other systems that rely upon this domain name, such as Web traffic for the zone, or mail traffic.

   h. Test the DNS again after 25 minutes.
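Two of the steps above lend themselves to a small script: capping the TTLs in the export, and confirming that nothing was lost in the import. The sketch below is one way to do that, assuming the export has already been parsed into simple records; the `Record` type, the helper names and the sample data are all hypothetical.

```python
from typing import NamedTuple


class Record(NamedTuple):
    name: str
    rtype: str
    ttl: int
    value: str


def cap_ttls(records: list[Record], max_ttl: int = 300) -> list[Record]:
    """Lower any TTL above max_ttl, leaving smaller TTLs untouched."""
    return [r._replace(ttl=min(r.ttl, max_ttl)) for r in records]


def missing_records(old: list[Record], new: list[Record]) -> list[Record]:
    """Return records from the old export that are absent from the new zone.

    TTLs are ignored, since they are deliberately changed during the import.
    """
    def key(r: Record) -> tuple[str, str, str]:
        return (r.name.lower().rstrip("."), r.rtype.upper(), r.value)

    present = {key(r) for r in new}
    return [r for r in old if key(r) not in present]


old_zone = [
    Record("www.example.com.", "A", 86400, "192.0.2.10"),
    Record("example.com.", "MX", 3600, "10 mail.example.com."),
]
new_zone = cap_ttls(old_zone)[:1]  # simulate an import that dropped the MX record

print(cap_ttls(old_zone))
print(missing_records(old_zone, new_zone))
```

An empty result from `missing_records` is a reasonable gate before updating the Registrar with the new delegation.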

DNS Caching: TTL & Delegation records

A key element of DNS’s scalability is caching as much as possible. Every record has a customer-defined Time To Live value: the duration (in seconds) that a client may keep the record in its cache.

When making changes to records, we typically observe 50% of clients seeing cached values updated after the TTL period; a further 50% of the remainder see the update after another TTL period; in practice, the TTL almost acts like a half-life:

| Duration | Cumulative % clients seeing update after this time |
| --- | --- |
| 1 * TTL | 50% |
| 2 * TTL | 75% |
| 3 * TTL | 87.5% |
| 4 * TTL | 93.75% |
| 5 * TTL | 96.875% |
| 6 * TTL | 98.4375% |
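The half-life behaviour in the table can be written as 1 - 0.5^n for n elapsed TTL periods. The short sketch below simply reproduces the table from that formula; it models the rule of thumb described here, not a guarantee of how any particular resolver behaves.

```python
def fraction_updated(periods: int) -> float:
    """Approximate share of clients that have seen an update after n TTL periods."""
    return 1 - 0.5 ** periods


for n in range(1, 7):
    print(f"{n} * TTL: {fraction_updated(n):.4%}")
```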

We would typically wait at least 5 periods of the time-to-live value on a delegation before making a change. As many organisations have this value set to a week (or a month), this could take some time; we’d also recommend keeping this value relatively small once migrated, to ensure you have flexibility in re-delegation in future. 24 hours is a reasonable time for delegation records, unless you’re about to do a migration, in which case 300 seconds is reasonable (25 minutes for 96% of clients to see the update).

During this period, however, you could have your new DNS zone hosting the identical records of the old one, and any updates during this period should be applied to both (or updates can be avoided during this window).

However, some network operators choose to override TTL values as they see fit. A certain ISP in Australia would not honour any small TTL values, and this would result in at least a 24-hour TTL being enforced. Given the 5-TTL-period duration to get 96% of clients seeing the update, you may have to adjust your time frame to accommodate a parallel run of old and new DNS service. Unfortunately, you cannot force such ISPs to update.
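To put numbers on that time frame, the sketch below works out the wait for a given TTL, with an optional floor for resolvers that refuse to honour small values. The function name `propagation_wait` and its parameters are illustrative only.

```python
from datetime import timedelta


def propagation_wait(ttl_seconds: int, periods: int = 5,
                     resolver_min_ttl: int = 0) -> timedelta:
    """Time to wait for `periods` TTLs, using the larger of the record TTL
    and any minimum TTL enforced by an upstream resolver."""
    effective_ttl = max(ttl_seconds, resolver_min_ttl)
    return timedelta(seconds=effective_ttl * periods)


print(propagation_wait(300))                           # 0:25:00
print(propagation_wait(7 * 24 * 3600))                 # 35 days, 0:00:00
print(propagation_wait(300, resolver_min_ttl=86400))   # 5 days, 0:00:00
```

The last line is the awkward case: a 300 second TTL still implies a parallel run of around five days for clients behind a resolver that enforces a 24-hour minimum.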

Route53: Records to Add and Modify

Route53 will automatically create a Start of Authority (SOA) record for your zone. This standard record type has two fields of interest: the RNAME (the responsible person’s email address), and the default TTL value (used for Negative DNS responses, when a query tries to find something not defined). You can leave these as the default, but if you adjust them, then the RNAME field must point to a monitored mailbox, and the TTL adjustment may result in higher query traffic.

Outside of the SOA, there are a number of other DNS records you should put in place:

- SPF on the apex, now implemented as a Text (TXT) record, to indicate where your Email is permitted to originate from (low volume lookup traffic). Something like “v=spf1 mx -all” may do. You should still set a record even if your domain is not hosting email (for example “v=spf1 -all”), to indicate that all fraudulently generated email from it is SPAM.

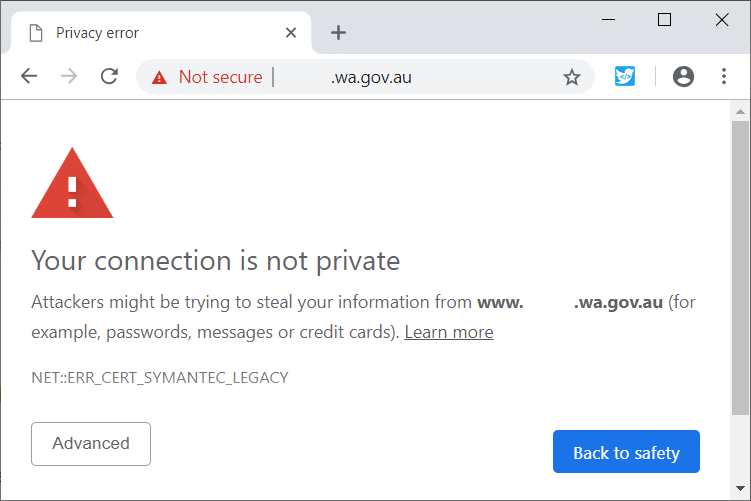

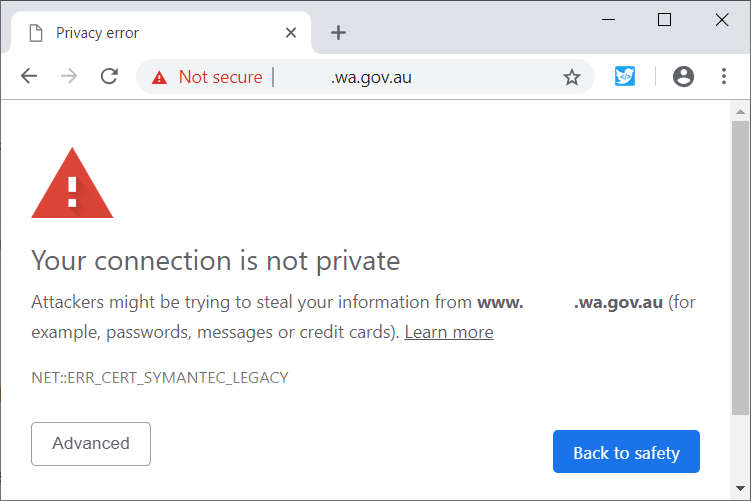

- CAA on the apex, to indicate to Certificate Authorities which CAs are permitted to issue certificates for your domain (extremely low volume lookup traffic). Something like the following may do:

  0 issue "letsencrypt.org"

  0 issue "amazon.com"

- DMARC: a TXT record on hostname _dmarc.yourdomain, with value “v=DMARC1; p=quarantine; rua=mailto:youremail@yourdomain”

- SMTP TLS Reporting: a TXT record on the hostname _smtp._tls.yourdomain with value “v=TLSRPTv1;rua=mailto:youremail@yourdomain”

- An MTA (Mail Transfer Agent) STS (Strict Transport Security) record: a TXT record for hostname _mta-sts.yourdomain with value “v=STSv1; id=2021021000;” – the number can be a representation of the current datetime in yyyymmddhhmm that can be incremented. You should also set up a static web site to host your MTA STS policy document itself on https://mta-sts.yourdomain/.well-known/mta-sts.txt.
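The records in this list can be generated from two inputs, the domain and a reporting mailbox. The sketch below builds them as plain (name, type, value) tuples ready for review; the helper names, the example domain and the mailbox are placeholders, and the values simply mirror the suggestions above rather than a policy that suits every domain.

```python
from datetime import datetime, timezone
from typing import Optional


def mta_sts_id(now: Optional[datetime] = None) -> str:
    """Return a yyyymmddhhmm policy id, which only ever needs to increase."""
    now = now or datetime.now(timezone.utc)
    return now.strftime("%Y%m%d%H%M")


def mail_security_records(domain: str, report_mailbox: str,
                          sts_id: str) -> list[tuple[str, str, str]]:
    """Build the apex and underscore-prefixed records suggested above."""
    return [
        (domain, "TXT", "v=spf1 mx -all"),
        (domain, "CAA", '0 issue "letsencrypt.org"'),
        (domain, "CAA", '0 issue "amazon.com"'),
        (f"_dmarc.{domain}", "TXT",
         f"v=DMARC1; p=quarantine; rua=mailto:{report_mailbox}"),
        (f"_smtp._tls.{domain}", "TXT",
         f"v=TLSRPTv1;rua=mailto:{report_mailbox}"),
        (f"_mta-sts.{domain}", "TXT", f"v=STSv1; id={sts_id};"),
    ]


for name, rtype, value in mail_security_records(
        "example.com", "dns-reports@example.com", mta_sts_id()):
    print(f"{name:28} {rtype:4} {value}")
```

Generating the set in one place makes it harder to forget one of the records when a new zone is created.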

For checking this domain’s security configuration, have a look at Hardenize.com, by Ivan Ristić.

Post-Migration

Ensure that all administrative staff have access to set and update the records they need.

Lastly, don’t forget to decommission the existing DNS service once you are convinced you do not need to go back to it.