Trying to fetch all the RDS CA certificates as a bundle, and inspect them:

```python
#!/usr/bin/python3
# vim: tabstop=8 expandtab shiftwidth=4 softtabstop=4

import re
import urllib.request
from datetime import datetime, timezone

from OpenSSL import crypto


def get_certs():
    """Download the combined RDS CA bundle and split it into PEM strings."""
    url = ("https://s3.amazonaws.com/rds-downloads/"
           "rds-combined-ca-bundle.pem")
    with urllib.request.urlopen(url=url) as f:
        pem_certs = []
        current_cert = ''
        for line in f.read().decode('utf-8').splitlines():
            current_cert = current_cert + line + "\n"
            # An END marker closes the current certificate; start a new one.
            if re.match("^-----END CERTIFICATE-----", line):
                pem_certs.append(current_cert)
                current_cert = ""
    return pem_certs


def validate_certs(certs):
    # Find the self-signed root (issuer CN == subject CN).
    # It is not used further in this script.
    ca = None
    for cert_pem in certs:
        cert = crypto.load_certificate(crypto.FILETYPE_PEM, cert_pem)
        if cert.get_issuer().CN == cert.get_subject().CN:
            ca = cert

    # Certificate times are in UTC, so compare against the current UTC time
    # (naive, to match the naive datetimes parsed below).
    now = datetime.now(timezone.utc).replace(tzinfo=None)
    for cert_pem in certs:
        cert = crypto.load_certificate(crypto.FILETYPE_PEM, cert_pem)
        # get_notBefore()/get_notAfter() return bytes like b"20200305091131Z".
        start_time = datetime.strptime(
            cert.get_notBefore().decode('utf-8')[0:14], "%Y%m%d%H%M%S")
        end_time = datetime.strptime(
            cert.get_notAfter().decode('utf-8')[0:14], "%Y%m%d%H%M%S")
        print("%s: %s (#%s) exp %s" %
              (cert.get_issuer().CN, cert.get_subject().CN,
               cert.get_serial_number(), end_time))
        if end_time < now:
            print("EXPIRED: %s on %s" % (cert.get_subject().CN, end_time))
        if start_time > now:
            print("NOT YET ACTIVE: %s on %s" % (cert.get_subject().CN,
                                                start_time))


pem_certs = get_certs()
validate_certs(pem_certs)
```
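If you'd rather not build each certificate up line by line, a regular expression can split the bundle in one pass. This is a minimal, stdlib-only sketch; `split_pem_bundle` and `PEM_CERT_RE` are hypothetical names, and unlike the loop in `get_certs()` it discards any text that sits outside the BEGIN/END markers.

```python
import re

# Hypothetical helper: pull every PEM certificate block out of a bundle string.
# DOTALL lets ".*?" span the base64 lines; the non-greedy match stops at the
# first END marker, so adjacent certificates are not merged into one.
PEM_CERT_RE = re.compile(
    r"-----BEGIN CERTIFICATE-----.*?-----END CERTIFICATE-----",
    re.DOTALL,
)


def split_pem_bundle(bundle_text):
    """Return each certificate in the bundle as its own PEM string."""
    return [m.group(0) + "\n" for m in PEM_CERT_RE.finditer(bundle_text)]


if __name__ == "__main__":
    # Dummy bundle with fake base64 bodies, just to show the splitting.
    sample = (
        "some header text\n"
        "-----BEGIN CERTIFICATE-----\nAAAA\nBBBB\n-----END CERTIFICATE-----\n"
        "-----BEGIN CERTIFICATE-----\nCCCC\n-----END CERTIFICATE-----\n"
    )
    for pem in split_pem_bundle(sample):
        print(pem.splitlines()[1])
```

Each returned string could then be handed to `crypto.load_certificate(crypto.FILETYPE_PEM, ...)` exactly as in `validate_certs()`.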

Output

Today this gives me:

```
Amazon RDS Root CA: Amazon RDS Root CA (#66) exp 2020-03-05 09:11:31
Amazon RDS Root CA: Amazon RDS ap-northeast-1 CA (#68) exp 2020-03-05 22:03:06
Amazon RDS Root CA: Amazon RDS ap-southeast-1 CA (#69) exp 2020-03-05 22:03:19
Amazon RDS Root CA: Amazon RDS ap-southeast-2 CA (#70) exp 2020-03-05 22:03:24
Amazon RDS Root CA: Amazon RDS eu-central-1 CA (#71) exp 2020-03-05 22:03:31
Amazon RDS Root CA: Amazon RDS eu-west-1 CA (#72) exp 2020-03-05 22:03:35
Amazon RDS Root CA: Amazon RDS sa-east-1 CA (#73) exp 2020-03-05 22:03:40
Amazon RDS Root CA: Amazon RDS us-east-1 CA (#67) exp 2020-03-05 21:54:04
Amazon RDS Root CA: Amazon RDS us-west-1 CA (#74) exp 2020-03-05 22:03:45
Amazon RDS Root CA: Amazon RDS us-west-2 CA (#75) exp 2020-03-05 22:03:50
Amazon RDS Root CA: Amazon RDS ap-northeast-2 CA (#76) exp 2020-03-05 00:05:46
Amazon RDS Root CA: Amazon RDS ap-south-1 CA (#77) exp 2020-03-05 21:29:22
Amazon RDS Root CA: Amazon RDS us-east-2 CA (#78) exp 2020-03-05 19:58:45
Amazon RDS Root CA: Amazon RDS ca-central-1 CA (#79) exp 2020-03-05 00:10:11
Amazon RDS Root CA: Amazon RDS eu-west-2 CA (#80) exp 2020-03-05 17:44:42
```
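Every certificate in that list expires on 2020-03-05, so it is worth checking not just "expired or not" but "expiring soon". Note that pyOpenSSL documents `get_notAfter()` as an ASN.1 time in the form `YYYYMMDDhhmmssZ`, where the trailing `Z` means UTC, while a bare `datetime.now()` is local time. Here is a small sketch that keeps everything timezone-aware; `parse_asn1_time`, `expiry_status` and the 30-day `warn_days` window are illustrative assumptions, and the parser only accepts the `Z` form.

```python
from datetime import datetime, timedelta, timezone


def parse_asn1_time(raw):
    """Parse an ASN.1 time such as b"20200305091131Z" as an aware UTC datetime."""
    text = raw.decode("ascii") if isinstance(raw, bytes) else raw
    # The trailing "Z" means UTC, so attach that timezone explicitly.
    return datetime.strptime(text, "%Y%m%d%H%M%SZ").replace(tzinfo=timezone.utc)


def expiry_status(not_after, now=None, warn_days=30):
    """Classify a certificate's notAfter as 'expired', 'expiring' or 'ok'."""
    end = parse_asn1_time(not_after)
    if now is None:
        now = datetime.now(timezone.utc)
    if end <= now:
        return "expired"
    if end - now <= timedelta(days=warn_days):
        return "expiring"
    return "ok"


if __name__ == "__main__":
    # The root CA's expiry from the output above, written in ASN.1 form,
    # checked as of an arbitrary date about a week earlier.
    as_of = datetime(2020, 2, 27, tzinfo=timezone.utc)
    print(expiry_status(b"20200305091131Z", now=as_of))  # expiring
```

In `validate_certs()` you would pass `cert.get_notAfter()` straight into `expiry_status()`.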

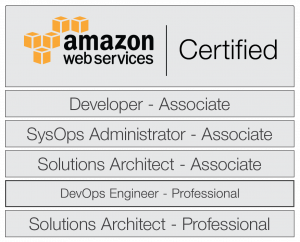

Today I went and sat yet another of the AWS Certifications; I’ve been doing a bit of a Pokemon approach and collecting them all.
