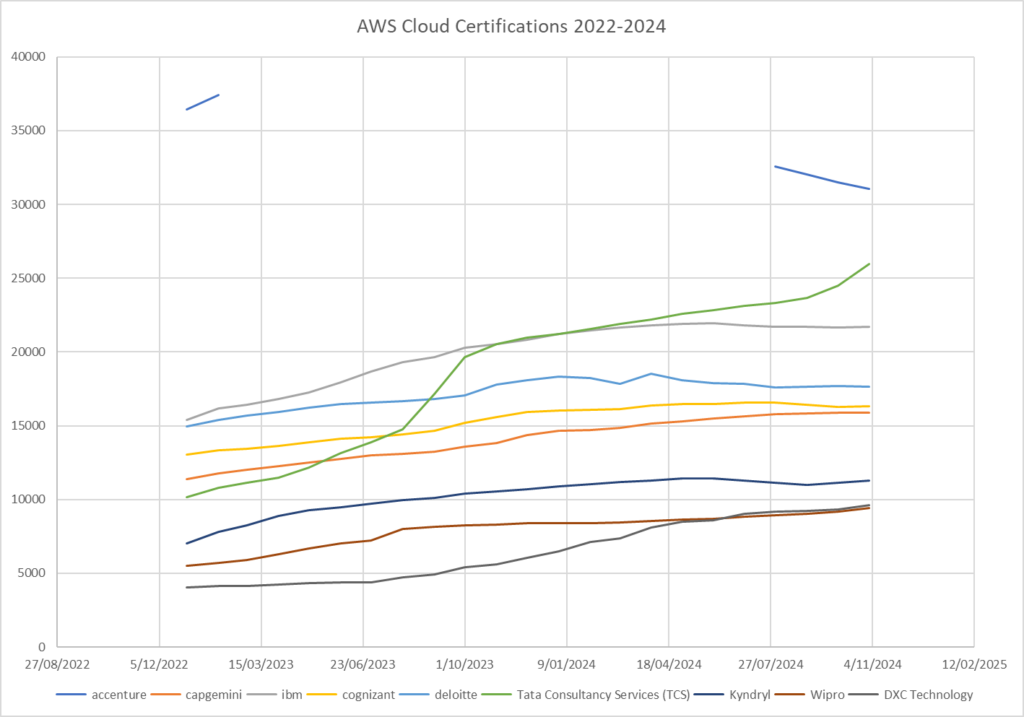

I track the capability of the consulting services partners by a number of attributes. Here is what the top 9 look like, by count of AWS Certifications, over the two years to November 2024:

It appears that Accenture is in danger of losing its dominant position as the world's largest AWS Cloud consulting services partner, perhaps sometime in the next three to six months. TCS has accelerated from 6th position in 2022 to 2nd position now.

It appears that DXC was lagging the others, but has now caught back up.

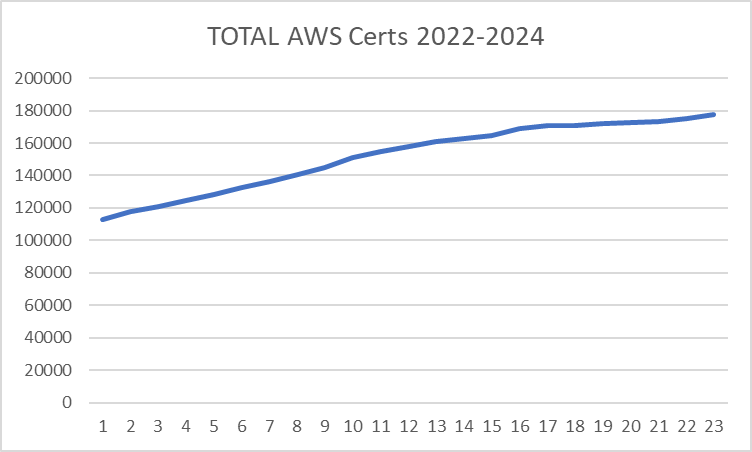

Now let's look at an aggregate total of the top 20 partners’ certifications, excluding Accenture (due to missing data), and see what the ecosystem growth is like:

The number of certs held looks to be growing steadily, up 57.3% over two years.
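To put that two-year figure on a per-year footing, here is a minimal sketch of the arithmetic. It assumes growth compounds evenly across both years, which the chart may not bear out; the function names `pct_growth` and `annualised_growth` are mine, purely for illustration, and the only input taken from the data is the 57.3% quoted above.

```python
def pct_growth(start: float, end: float) -> float:
    """Fractional growth from start to end, e.g. 0.573 for 57.3%."""
    if start <= 0:
        raise ValueError("start must be positive")
    return end / start - 1.0


def annualised_growth(total_growth: float, years: float) -> float:
    """Compound annual rate equivalent to total_growth over the given years.

    Assumes even compounding, which is a simplification.
    """
    if years <= 0:
        raise ValueError("years must be positive")
    return (1.0 + total_growth) ** (1.0 / years) - 1.0


# 57.3% over two years is the figure quoted in the text.
rate = annualised_growth(0.573, 2)
print(f"{rate:.1%} per year")  # prints "25.4% per year"
```

So, under that assumption, the ecosystem is adding roughly a quarter to its certification count each year.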

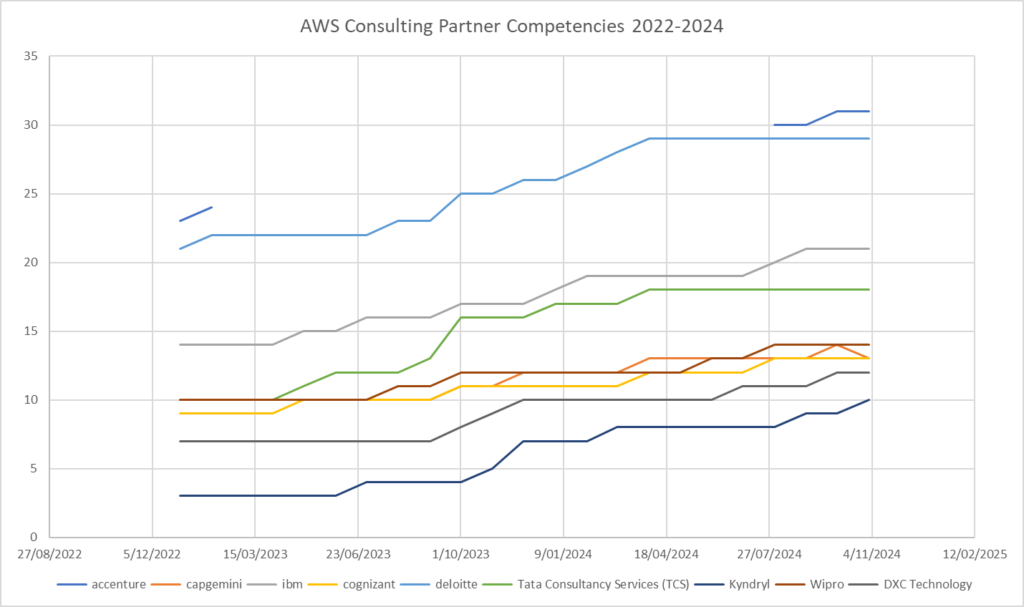

Looking at the AWS Partner Competencies achieved for those top 9 (by certifications held):

I find it interesting to derive the focus and attention these organisations are placing on validating the AWS Cloud skills of their workforce.

For many of these, the long tail is the set of individuals who hold only one certification, which is often the Cloud Practitioner – Foundational cert. This level of validation is aimed at being non-technical, and is often achieved by sales, marketing, and management folk who do not have the delivery capability to competently deploy a Lambda function or set a security group! However, it's beyond my visibility to remove this cert from the totals I can see. This means that the true delivery capability may be very different for these organisations.